How To Stay Safe From AI

Artificial intelligence (AI) has become an integral part of daily life. From smart home devices to voice assistants, AI makes many tasks easier and more efficient. Its growing presence, however, also raises concerns about privacy and security. This article offers insights and practical tips on how to safeguard yourself against the risks associated with AI. By understanding its capabilities, being mindful of your data, and staying informed about the latest security measures, you can navigate the world of AI with confidence while keeping your personal information safe.

Understanding AI

Artificial intelligence, commonly known as AI, refers to the development of computer systems that can perform tasks that usually require human intelligence. These tasks include speech recognition, decision-making, problem-solving, and language translation. AI systems rely on algorithms and large amounts of data to analyze and learn from patterns, enabling them to make predictions and automate processes. In essence, AI simulates human intelligence by processing vast amounts of data and making informed decisions based on that data.

Definition of AI

AI can be defined as the capability of a computer system to perform tasks that would typically require human intelligence. This includes learning from experience, adapting to new information, and making decisions based on data analysis. Today's AI systems are designed to perform specific tasks, such as facial recognition or natural language processing; general-purpose systems that could handle any task a human can remain a research goal. The broader aim of AI is to create machines that can mimic human intelligence and perform tasks more efficiently and accurately.

Types of AI

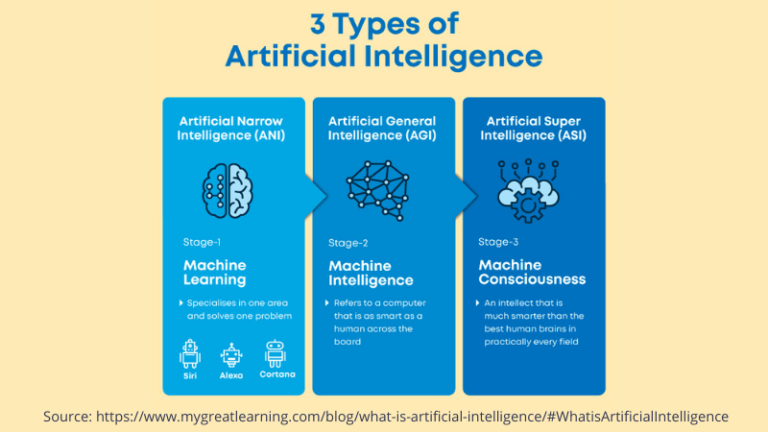

There are various types of AI, each with its own capabilities and applications:

- Narrow AI: Also known as weak AI, this type of AI is designed to perform specific tasks within a limited domain. Examples include virtual personal assistants like Siri or Alexa, chatbots, and recommendation algorithms used by online platforms.

- General AI: This is the holy grail of AI development: a system that possesses the same level of intelligence as a human being. General AI would be able to understand and perform any intellectual task that a human can. However, truly general AI is still largely a theoretical concept and has not been achieved yet.

- Artificial Superintelligence: This refers to AI systems that surpass human intelligence in virtually every aspect. Artificial superintelligent systems would possess a level of intellectual ability far beyond what humans can comprehend. The development of artificial superintelligence carries both potential benefits and risks, and it remains a topic of ongoing research and discussion.

Applications of AI

AI has found applications in various industries and sectors, providing valuable assistance and improving efficiency in numerous tasks. Some notable applications of AI include:

- Healthcare: AI is used in medical imaging to aid in the diagnosis of diseases. AI-powered robots and virtual assistants are also being developed to assist in patient care and perform tasks such as monitoring vital signs.

- Finance: AI algorithms are used in stock trading, fraud detection, and credit scoring. These algorithms analyze large sets of financial data to identify patterns and make predictions.

- Transportation: AI is used in self-driving cars to navigate and make real-time decisions. It is also utilized in optimizing logistics and transportation routes.

- Education: AI-powered tutoring systems and virtual learning platforms use personalized algorithms to deliver tailored educational content. These systems can adapt to individual learning styles and provide personalized feedback.

Potential Threats of AI

While AI offers numerous benefits and opportunities, it also poses potential threats that need to be addressed. It is essential to understand and mitigate these risks to ensure the responsible development and deployment of AI systems.

Job Displacement

One of the biggest concerns surrounding AI is the potential displacement of human jobs. As AI systems become more advanced, there is a possibility that they can replace humans in various tasks and industries. Jobs that involve repetitive tasks or can be automated may be at higher risk. However, it is important to note that AI can also create new job opportunities and transform existing roles.

Privacy Concerns

The widespread use of AI systems generates vast amounts of data, raising concerns about privacy and data protection. AI systems often collect and analyze personal information to make informed decisions and predictions. Therefore, it is crucial to ensure that AI systems adhere to strict privacy regulations and safeguard individuals’ personal data.

Autonomous Weapons

The development of AI-powered autonomous weapons raises significant ethical concerns. Autonomous weapons can make decisions about using lethal force without direct human control. It is crucial to establish international regulations and ethical guidelines to prevent the misuse of such weapons and ensure human accountability in decision-making.

Manipulation of Information

AI algorithms can be exploited to manipulate information and spread misinformation. Deepfakes, for example, are AI-generated or AI-altered videos, images, or audio recordings that appear authentic but are fabricated. This poses risks to public trust, political stability, and social cohesion. Implementing robust measures to detect and combat the manipulation of information is essential to maintaining societal integrity.

Protecting Personal Data

As AI systems rely heavily on data analysis, protecting personal data becomes paramount. Here are some measures to consider for safeguarding personal data:

Secure Passwords and Authentication

Creating strong and unique passwords for online accounts is crucial in preventing unauthorized access. Additionally, enabling two-factor authentication adds an extra layer of security by requiring a second form of verification, such as a code sent to a mobile device.
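As a rough illustration of what "strong and unique" can mean in practice, the sketch below generates a random password with Python's standard `secrets` module. The function name `generate_password`, the default length of 16, and the symbol set are assumptions chosen for this example, not requirements of any particular service; a reputable password manager does the same job with less effort.

```python
import secrets
import string

# Hypothetical symbol set for this example; some sites accept a different one.
SYMBOLS = "!@#$%^&*-_=+"


def generate_password(length: int = 16) -> str:
    """Return a random password with lowercase, uppercase, digit, and symbol characters."""
    if length < 4:
        raise ValueError("length must be at least 4 to include every character class")
    alphabet = string.ascii_letters + string.digits + SYMBOLS
    while True:
        # secrets.choice draws from the operating system's secure random source.
        candidate = "".join(secrets.choice(alphabet) for _ in range(length))
        # Retry until every character class is present at least once.
        if (any(c.islower() for c in candidate)
                and any(c.isupper() for c in candidate)
                and any(c.isdigit() for c in candidate)
                and any(c in SYMBOLS for c in candidate)):
            return candidate


if __name__ == "__main__":
    print(generate_password())  # a different password on every run
```

Generate a separate password for each account, so that a leak at one service does not expose the others.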

Encryption

Data encryption is essential to protect sensitive information from unauthorized access. Encryption does not stop data from being intercepted or stolen, but strong encryption algorithms and secure communication protocols keep the data confidential, because anyone without the key cannot easily decipher it.

Data Minimization

Collecting and storing only the necessary personal data can help minimize the risk of data breaches and unauthorized access. Avoid collecting excessive or unnecessary data and regularly review data storage practices to ensure compliance with privacy regulations.
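For readers who build or configure services that collect data, data minimization can be as simple as keeping an explicit allow-list of fields and discarding everything else before storage. The sketch below shows one possible approach; the field names and the `minimize_record` helper are hypothetical and would depend on what a given service genuinely needs.

```python
# Hypothetical allow-list: the only fields this example service needs to store.
ALLOWED_FIELDS = frozenset({"email", "display_name"})


def minimize_record(record: dict, allowed_fields: frozenset = ALLOWED_FIELDS) -> dict:
    """Return a copy of record containing only the allowed fields."""
    return {key: value for key, value in record.items() if key in allowed_fields}


if __name__ == "__main__":
    # Example sign-up form with more data than the service needs.
    signup = {
        "email": "ada@example.com",
        "display_name": "Ada",
        "birth_date": "1990-01-01",
        "phone": "555-0100",
    }
    print(minimize_record(signup))  # birth_date and phone are dropped
```

An allow-list is used rather than a block-list so that any new field added to the form later is dropped by default until someone decides it is needed.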

Use of Virtual Private Networks (VPNs)

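When accessing the internet or using AI systems, especially over public Wi-Fi, a VPN can help protect your privacy. A VPN encrypts the traffic between your device and the VPN server, making it difficult for others on the same network to intercept or monitor your online activities. It does not, however, limit what the services you use can collect once your data reaches them, so choose a VPN provider you trust.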

Securing Internet of Things (IoT) Devices

As IoT devices become increasingly prevalent in our daily lives, it is crucial to ensure their security to prevent potential vulnerabilities. Here are some ways to secure IoT devices:

Regular Firmware Updates

Keeping IoT devices up to date with the latest firmware updates is essential. These updates often include security patches that address vulnerabilities and prevent potential attacks.

Strong and Unique Passwords

Setting strong and unique passwords for IoT devices is crucial in preventing unauthorized access. Avoid using default or easily guessable passwords, and consider using a password manager to securely store and manage passwords.

Disabling Unnecessary Features

Many IoT devices come with various features that may not be necessary for your specific use case. Disabling unused features reduces the attack surface and minimizes the risk of exploitation.

Implementing Network Segmentation

Placing your IoT devices on a network segment separate from your regular home or office network adds an extra layer of security. If one device is compromised, segmentation limits the attacker’s access and makes it harder for the compromise to spread to other devices or sensitive information.
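Once a separate IoT segment exists, it is worth checking now and then that devices are actually on the network they belong to. The sketch below uses Python's standard `ipaddress` module for such a check. The subnet `192.168.20.0/24`, the device names, and the `find_misplaced` helper are all assumptions made up for this example; your router's address ranges will differ.

```python
import ipaddress

# Hypothetical address range reserved for IoT devices in this example.
IOT_SEGMENT = ipaddress.ip_network("192.168.20.0/24")


def find_misplaced(devices: dict[str, tuple[str, bool]]) -> list[str]:
    """Return names of devices that sit on the wrong side of the segmentation.

    devices maps a device name to (ip_address, is_iot_device).
    """
    misplaced = []
    for name, (address, is_iot) in devices.items():
        in_iot_segment = ipaddress.ip_address(address) in IOT_SEGMENT
        # An IoT device outside the segment, or a regular device inside it, is flagged.
        if in_iot_segment != is_iot:
            misplaced.append(name)
    return misplaced


if __name__ == "__main__":
    inventory = {
        "thermostat": ("192.168.20.15", True),
        "laptop": ("192.168.10.4", False),
        "camera": ("192.168.10.9", True),  # IoT device on the main network
    }
    print(find_misplaced(inventory))  # ['camera']
```

This only audits an inventory you maintain yourself; the segmentation itself is configured on the router, for example through a guest network or VLAN.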

Awareness and Education

To stay safe from AI-related risks, it is crucial to be aware and educated about AI capabilities and potential vulnerabilities. Here are some steps to enhance AI awareness:

Understanding AI Capabilities

Gaining a basic understanding of what AI can and cannot do is essential in navigating the potential risks. Knowing the limitations and capabilities of AI systems can help you make informed decisions and avoid falling for misinformation or unrealistic expectations.

Recognizing AI-Powered Systems

Be aware of the AI systems and algorithms that are at work in various applications and platforms. Recognizing when AI is being used can help you assess potential risks and make informed choices about the personal data you share.

Identifying Potential Risks and Vulnerabilities

Developing the ability to identify potential risks and vulnerabilities in AI systems allows you to take proactive measures to protect yourself and your data. Stay informed about the latest AI-related security threats and adopt appropriate countermeasures.

Ethical Considerations

Ethical considerations play a vital role in the development and deployment of AI systems. Here are some ethical guidelines to consider:

Ensuring Transparency and Accountability

AI systems should be designed and deployed in a transparent manner, ensuring that individuals can understand how decisions are made. Establishing accountability mechanisms and making AI systems auditable can help prevent misuse and ensure responsible decision-making.

Adhering to Privacy Regulations

Compliance with privacy regulations is crucial in protecting individuals’ rights and personal data. AI systems should be designed to be privacy-preserving, with clear consent mechanisms and strict safeguards for data handling and storage.

Avoiding Bias and Discrimination

AI algorithms can be prone to biases that reflect human prejudices and stereotypes, often because they learn from historical data that contains those patterns. Developers should test AI systems for bias and correct any discrimination against individuals based on factors such as race, gender, or socioeconomic background.
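One simple way to start looking for bias is to compare how often a system gives a favorable outcome to each group. The sketch below computes per-group approval rates and the gap between the highest and lowest rate, a measure sometimes called the demographic parity difference. It is only one of several fairness metrics, the group labels and decisions are made-up illustration data, and a large gap is a signal to investigate rather than proof of discrimination.

```python
from collections import defaultdict


def selection_rates(decisions: list[tuple[str, bool]]) -> dict[str, float]:
    """Return the share of favorable outcomes for each group.

    decisions is a list of (group_label, was_approved) pairs.
    """
    totals = defaultdict(int)
    approved = defaultdict(int)
    for group, was_approved in decisions:
        totals[group] += 1
        if was_approved:
            approved[group] += 1
    return {group: approved[group] / totals[group] for group in totals}


def parity_gap(decisions: list[tuple[str, bool]]) -> float:
    """Return the difference between the highest and lowest group approval rate."""
    rates = selection_rates(decisions)
    return max(rates.values()) - min(rates.values())


if __name__ == "__main__":
    # Made-up decisions for two hypothetical groups, A and B.
    sample = [
        ("A", True), ("A", True), ("A", False), ("A", True),
        ("B", True), ("B", False), ("B", False), ("B", False),
    ]
    print(selection_rates(sample))  # {'A': 0.75, 'B': 0.25}
    print(parity_gap(sample))       # 0.5
```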

Monitoring AI Systems

Continuous monitoring and auditing of AI systems are essential to ensure their reliability and mitigate potential risks. Here are some practices for monitoring AI systems:

Implementing Robust Validation and Verification Processes

AI systems should undergo rigorous testing and validation to ensure their accuracy and reliability. Verification processes help identify potential biases, vulnerabilities, or flaws in the system’s decision-making.

Regular Auditing of AI Systems

Periodic audits of AI systems help identify any deviations or anomalies that may have occurred during the system’s operation. Auditing can reveal potential vulnerabilities or issues that need to be addressed promptly.

Reviewing AI Decision-Making Processes

Regularly reviewing and evaluating the decision-making processes of AI systems allows for the identification of any biases or discrepancies. Transparency in decision-making helps maintain public trust and ensures the ethical use of AI technologies.

Collaborating with Experts and Authorities

Collaboration with AI specialists, experts, and regulatory authorities is crucial in developing sound AI governance frameworks. Here are some ways to collaborate effectively:

Engaging with AI Specialists

Seeking advice and guidance from AI specialists and experts can help ensure the responsible and ethical development and deployment of AI systems. Collaborating with professionals can provide valuable insights and contribute to the understanding of AI-related risks and mitigation strategies.

Contributing to Policy Development

Active participation in the development of policies and regulations surrounding AI helps ensure that societal interests are adequately represented. Engaging in public consultations, collaborating with policymakers, and contributing to the formulation of guidelines can shape the ethical and responsible use of AI technologies.

Following Recommendations from Trusted Organizations

Keeping up to date with recommendations and guidelines from trusted organizations, such as research institutions or regulatory bodies, provides valuable insights into best practices and risk mitigation strategies. Staying informed about the latest developments in AI governance helps individuals and organizations make informed decisions.

Preparing for AI Disruptions

The rapid development of AI has the potential to disrupt industries and job markets. Here are some ways to prepare for AI disruptions:

Developing Adaptable Skills

Investing in acquiring adaptable skills that complement AI systems can help future-proof job roles. Skills such as critical thinking, creativity, complex problem-solving, and emotional intelligence are difficult to replicate with AI, making them valuable assets in a changing job market.

Promoting Job Reskilling

Organizations can facilitate reskilling and upskilling programs to prepare employees for the future of work. Offering training opportunities in emerging fields related to AI and automation can help individuals transition into new roles and remain relevant in the job market.

Creating Safety Nets and Social Support Mechanisms

As job markets evolve due to AI disruptions, it is essential to establish safety nets and social support mechanisms to ensure individuals are not left behind. Policies such as universal basic income or job transition programs can provide a safety net during transitional periods and support individuals in adapting to changing job markets.

Development of AI Governance

The development of robust AI governance frameworks is crucial in ensuring the responsible and ethical use of AI technologies. Here are some key considerations for AI governance:

Establishing Ethical Guidelines

Clear and comprehensive ethical guidelines provide a foundation for responsible AI development and deployment. These guidelines should address issues such as privacy, transparency, accountability, fairness, and the prevention of misuse or harm.

Regulating AI Development and Deployment

Regulatory frameworks should be established to govern the development and deployment of AI systems. These regulations should address safety, privacy, security, and accountability, promoting the ethical and responsible use of AI technologies.

Promoting International Cooperation

AI governance should be a global effort, with international cooperation and collaboration at its core. Encouraging knowledge sharing, harmonizing regulations, and facilitating cross-border collaboration can help address the challenges posed by AI on a global scale.

In conclusion, understanding AI and its potential threats is essential in staying safe in an AI-driven world. By protecting personal data, securing IoT devices, promoting awareness and education, considering ethical implications, monitoring AI systems, collaborating with experts, preparing for disruptions, and developing robust AI governance, individuals and societies can navigate the complexities of AI while maintaining privacy, security, and ethical standards.